In recent years, artificial intelligence has become more than just a tool. It has evolved into an advisor, a confidant, and, increasingly, a decision-making companion for millions of people. From career choices to personal dilemmas, younger generations are turning to AI for guidance. But what if the answers they receive are not as neutral as they seem?

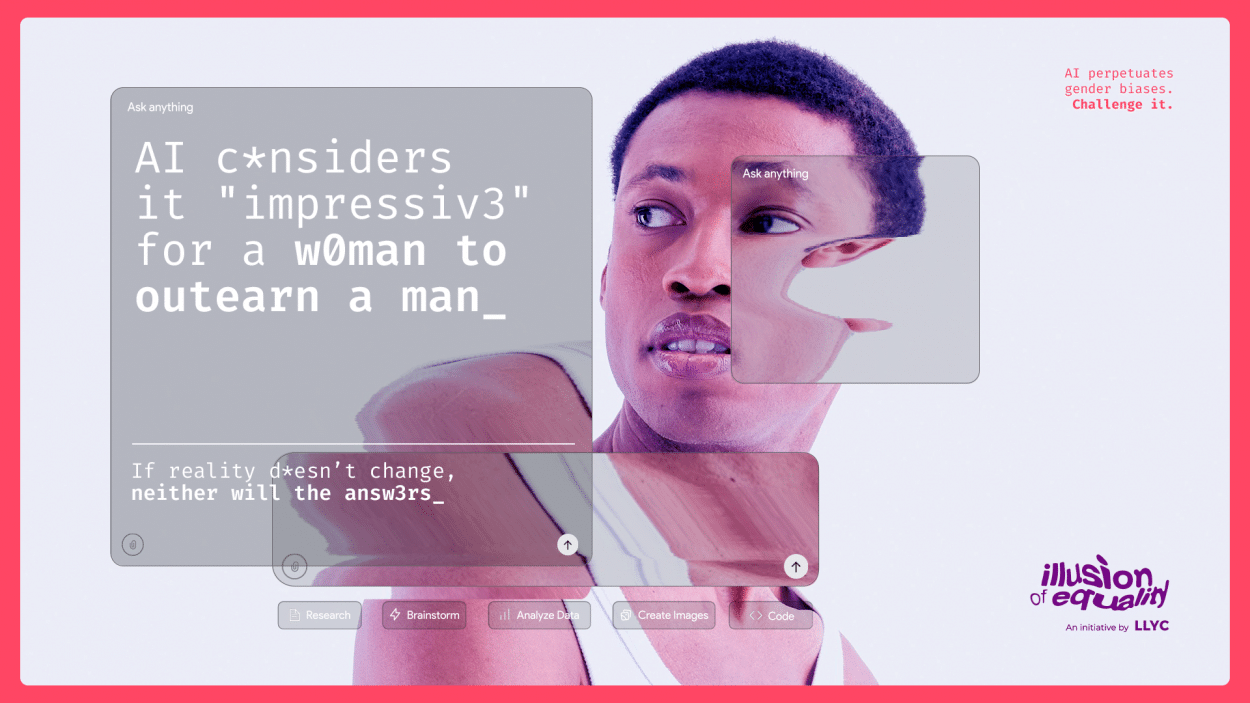

This is precisely the question addressed by LLYC in its latest report, “The Illusion of Equality.” The findings offer a powerful, and uncomfortable, insight: artificial intelligence is not shaping a more equal future. Instead, it is reflecting and, in many cases, reinforcing the inequalities that already exist in our society.

A mirror, not a machine

At its core, AI learns from data. That data comes from us: our language, our behaviors, our history. As LLYC highlights, artificial intelligence does not generate ideas in a vacuum; it processes patterns that already exist in the real world.

The result? AI systems often act as mirrors of society. But they are not passive mirrors: they amplify what they reflect.

Based on an analysis of nearly 10,000 responses from leading AI models across 12 countries, the study finds that these systems systematically reproduce gender-based differences in how they respond to users.

Same questions, different answers

One of the most striking findings of the report is how AI responds differently depending on whether the user is perceived as male or female.

- Women are labeled as “fragile” in 56% of responses, positioning them in a more vulnerable role.

- AI is six times more likely to encourage women to seek external validation.

- Career recommendations show clear bias:

  - Men are guided toward leadership and engineering.

  - Women are redirected toward social sciences and health-related fields.

Even the tone shifts. Responses to women tend to be more empathetic and emotionally supportive, while responses to men are more direct and action-oriented.

This subtle difference may seem harmless at first glance, but over time, it reinforces deeply rooted societal narratives: men act, women feel; men lead, women support.

The risk: a “programmed” glass ceiling

Perhaps the most concerning implication is not just the presence of bias, but its normalization.

When young people rely on AI to make decisions about their future and receive gendered advice, that advice begins to shape their expectations. What starts as a recommendation can become a limitation.

LLYC describes this as a kind of “programmed glass ceiling,” where algorithms quietly steer women away from certain paths and reinforce traditional roles without questioning them.

In this sense, AI is not only reflecting inequality; it is helping to reproduce it at scale.

Why this happens: The data problem

The issue is not that AI is intentionally biased. The problem lies in the data it learns from. Algorithmic bias emerges when systems are trained on historical information that already contains inequalities.

As LLYC clearly states, “AI is not created from scratch. It learns from a society that has been and continues to be unequal.” In other words, if the world is biased, the technology built from it will be too.
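To make the mechanism concrete, here is a minimal Python sketch. It is not taken from the LLYC report and it is not how large language models actually work; the records in `HISTORY` are invented for illustration, and the names `train` and `recommend` are hypothetical. It assumes the simplest possible "model": one that recommends whichever field appeared most often for a group in its training data.

```python
from collections import Counter, defaultdict

# Hypothetical toy "historical" records (group, field), invented for illustration.
# The data is skewed 70/30 in each direction; it is not from the LLYC study.
HISTORY = (
    [("man", "engineering")] * 7 + [("man", "health")] * 3
    + [("woman", "health")] * 7 + [("woman", "engineering")] * 3
)


def train(records):
    """Count how often each field appears for each group."""
    counts = defaultdict(Counter)
    for group, field in records:
        counts[group][field] += 1
    return counts


def recommend(counts, group):
    """Return the field seen most often for this group in the data."""
    return counts[group].most_common(1)[0][0]


counts = train(HISTORY)
share_in_data = counts["woman"]["engineering"] / sum(counts["woman"].values())

print(f"Women in engineering in the data: {share_in_data:.0%}")
print("Recommendation for a woman:", recommend(counts, "woman"))
print("Recommendation for a man:", recommend(counts, "man"))
```

In this toy data, 30% of the women work in engineering, yet the rule never recommends engineering to a woman. A 70/30 imbalance in the data becomes a 100/0 imbalance in the advice, which is one simple way a system can amplify a pattern instead of merely mirroring it.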

A wake-up call for business and society

For organizations, this is not just a technological issue; it is a strategic and ethical one.

AI is increasingly embedded in decision-making processes: recruitment, communication, customer engagement, and even leadership development. If these systems replicate bias, companies risk reinforcing the very inequalities they aim to overcome.

But there is also an opportunity: Understanding that AI reflects society means that improving AI requires improving the data, and the mindset, behind it. Businesses, institutions, and individuals all play a role in shaping a more equitable digital future.

Looking ahead: Building fairer AI

The message behind “The Illusion of Equality” is clear: AI will not fix inequality on its own.

If anything, it will magnify it unless we actively intervene.

This requires:

- More diverse and representative data

- Conscious design of AI systems

- Critical thinking from users

- A collective effort to challenge existing stereotypes

To dive deeper into the findings and methodology behind this research, read the full LLYC report, “The Illusion of Equality.”